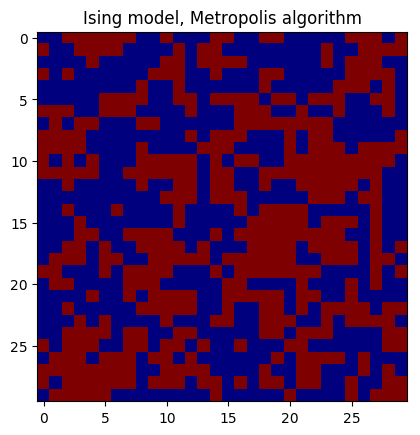

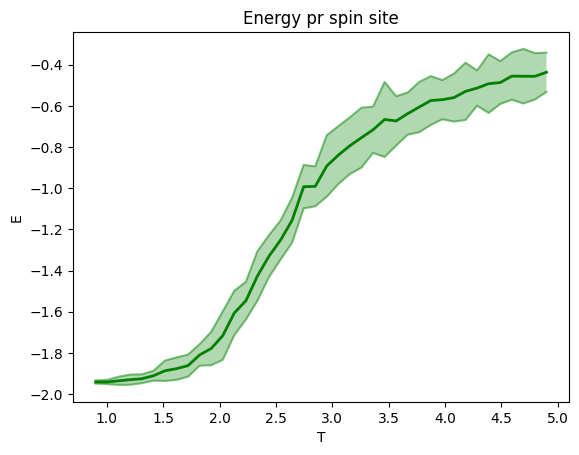

This model simulates ferromagnetism. Neighboring electrons affect each other through the magnetic fields generated by their spins, and the overall potential energy is reduced when neighboring electron spins point in the same direction.

The 2D Ising model sets up a square lattice in which each site represents an electron spin pointing either up (+1) or down (-1). Ferromagnetic behavior appears when a majority of the spins point the same way, either up or down, which can happen at low temperature. At high temperature, thermal disturbances randomize the directions of the magnetic dipoles (electron spins), so the magnet becomes a paramagnet and loses its ferromagnetic properties.

Multiprocessing is used to run the simulation processes in parallel, giving a significant speedup.

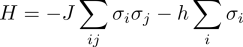

The Hamiltonian of the system is given by

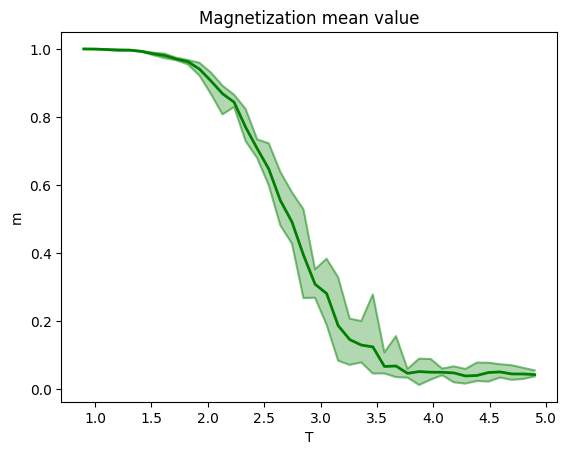

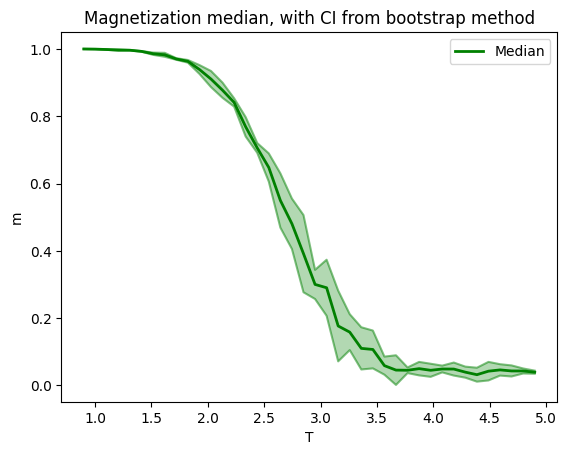

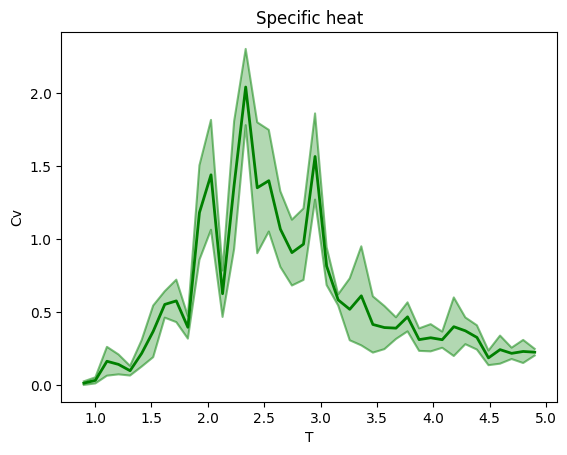
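As a rough illustration of how the energy of a spin configuration can be evaluated, here is a minimal sketch. It assumes the standard nearest-neighbour form H = -J Σ s_i s_j with no external field and periodic boundaries; the exact form used in this project is the one shown in the images above. The function name `total_energy`, the coupling `J`, and the list-of-lists lattice are illustrative choices, and plain Python is used only to keep the sketch dependency-free.

```python
def total_energy(spins, J=1.0):
    """Energy of a square L x L spin lattice with periodic boundaries.

    Assumes H = -J * sum over nearest-neighbour pairs of s_i * s_j
    (no external field). This form is an assumption for illustration.
    """
    L = len(spins)
    energy = 0.0
    for i in range(L):
        for j in range(L):
            # Pair each site with its right and lower neighbour only,
            # so every bond is counted exactly once.
            right = spins[i][(j + 1) % L]
            down = spins[(i + 1) % L][j]
            energy -= J * spins[i][j] * (right + down)
    return energy


# Fully aligned lattice: every bond is satisfied, so the energy is lowest.
aligned = [[1] * 4 for _ in range(4)]
# Checkerboard lattice: every bond is broken, so the energy is highest.
checkerboard = [[1 if (i + j) % 2 == 0 else -1 for j in range(4)]
                for i in range(4)]

print(total_energy(aligned))       # -32.0
print(total_energy(checkerboard))  # 32.0
```

The aligned state has the lowest energy, matching the statement that the potential energy is reduced when neighboring spins point in the same direction.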

The magnetization is a measure of alignment and order, and therefore of the strength of the magnetic field from the magnet. It can be negative or positive depending on which way the macroscopic field is oriented. Starting from an ordered state, the magnetization is higher at low T than at high T, since thermal disturbances break the alignment.

The magnetization per site is given by

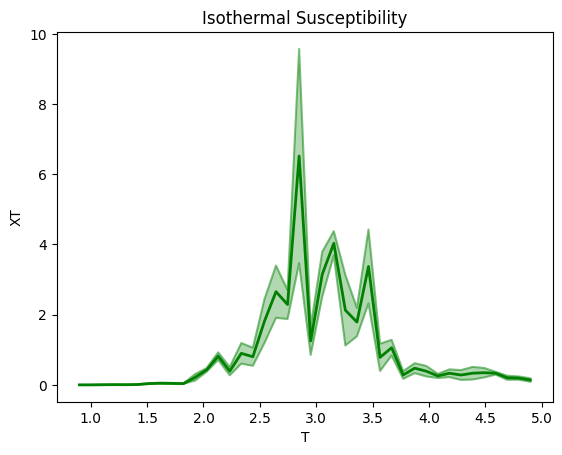
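A small sketch of this quantity, assuming the magnetization per site is the plain average of the spin values (some implementations take the absolute value instead; the formula in the image above is the authoritative one). The name `magnetization_per_site` is hypothetical.

```python
def magnetization_per_site(spins):
    """Average spin value over all lattice sites (assumed definition)."""
    n_sites = sum(len(row) for row in spins)
    return sum(sum(row) for row in spins) / n_sites


all_up = [[1] * 4 for _ in range(4)]
all_down = [[-1] * 4 for _ in range(4)]
# Equal numbers of up and down spins cancel out.
mixed = [[1 if (i + j) % 2 == 0 else -1 for j in range(4)] for i in range(4)]

print(magnetization_per_site(all_up))    # 1.0
print(magnetization_per_site(all_down))  # -1.0
print(magnetization_per_site(mixed))     # 0.0
```

The sign reflects the orientation of the macroscopic field, and the magnitude reflects how ordered the lattice is.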

The susceptibility is a measure of how the material reacts to an external magnetic field. If χ_v is positive, the material is more paramagnetic, and the dipoles inside can align to a greater degree with the external field. The model gives low susceptibility at low T, since the dipoles are firmly locked in their arrangement. Higher T makes the dipoles freer to be influenced by an external field, but at too high T the thermal disturbances are too strong to allow any significant alignment with an external field.

The magnetic susceptibility can be found from the variance of the magnetization

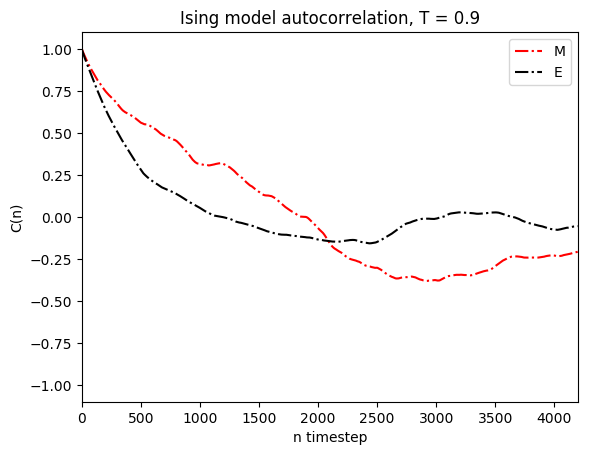

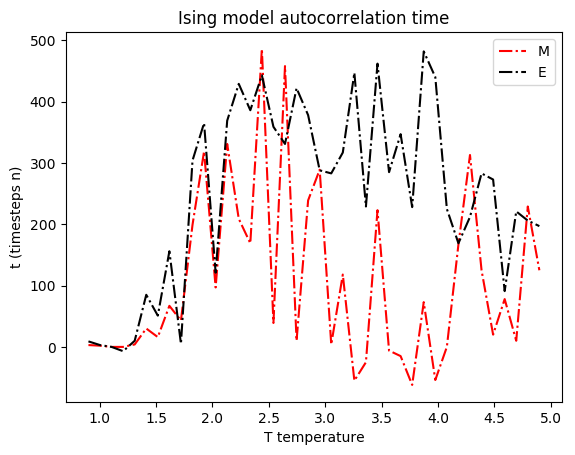
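The variance relation can be sketched as follows. This assumes the common form χ = (⟨M²⟩ - ⟨M⟩²) / (k_B T) with k_B = 1; the normalization in the project's own formula (for example a factor of the number of sites, or the use of |M|) may differ. The function name `susceptibility` and its parameters are illustrative.

```python
def susceptibility(magnetizations, temperature, k_B=1.0):
    """Susceptibility from the variance of sampled magnetization values.

    Assumes chi = (<M^2> - <M>^2) / (k_B * T); the prefactor is an
    assumption and may not match the project's normalization.
    """
    n = len(magnetizations)
    mean = sum(magnetizations) / n
    mean_sq = sum(m * m for m in magnetizations) / n
    return (mean_sq - mean * mean) / (k_B * temperature)


# A frozen magnet never fluctuates, so the variance (and chi) is zero.
print(susceptibility([1, 1, 1, 1], temperature=2.0))    # 0.0
# Large fluctuations between samples give a large susceptibility.
print(susceptibility([2, -2, 2, -2], temperature=2.0))  # 2.0
```

The samples passed in should be taken far enough apart to be approximately uncorrelated, otherwise the variance estimate is unreliable.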

Autocorrelation

To get meaningful average values we need independent, uncorrelated data. Since we change the spin states one site at a time, consecutive states are highly correlated, and so are any statistics computed from them. Looking at the autocorrelation times gives a sense of how many steps should separate each measurement. Two statistics can have different correlation times, so the safer practice is to pick the longest one.

A large t corresponds to a large lag, and the larger the lag, the more error-prone the autocorrelation estimate becomes, since fewer pairs of points are available to calculate it from. Hence the autocorrelation first drops off approximately exponentially, but then the lack of measurements starts to create noise in the autocorrelation function, producing wild oscillations and peaks. For that reason the autocorrelation plot is cut off at 0.7*tmax, so the noisy tail isn't visible.

Integrating the autocorrelation values gives the integrated autocorrelation time. In a sense this is a more direct way to get the exponential decay parameter than a least-squares fit. The integrated autocorrelation time is then used when collecting data, to avoid correlated measurements.
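The procedure described above can be sketched as follows. This is a minimal illustration, not the project's implementation: the function names, the trapezoid-style sum (half weight on lag 0), the hypothetical `t_max`, and the synthetic AR(1) series standing in for a real magnetization time series are all assumptions.

```python
import math
import random


def autocorrelation(series, max_lag):
    """Normalized autocorrelation rho(lag) for lag = 0..max_lag."""
    n = len(series)
    mean = sum(series) / n
    dev = [x - mean for x in series]
    var = sum(d * d for d in dev) / n  # must be non-zero
    rho = []
    for lag in range(max_lag + 1):
        pairs = n - lag  # fewer pairs at larger lag, hence a noisier estimate
        cov = sum(dev[i] * dev[i + lag] for i in range(pairs)) / pairs
        rho.append(cov / var)
    return rho


def integrated_autocorrelation_time(rho):
    """Sum rho over lag, with half weight on lag 0 (trapezoid-style)."""
    return 0.5 * rho[0] + sum(rho[1:])


# Synthetic stand-in for a magnetization time series: an AR(1) process
# whose autocorrelation decays roughly exponentially. Not simulation data.
random.seed(1)
series = [0.0]
for _ in range(4999):
    series.append(0.9 * series[-1] + random.gauss(0.0, 1.0))

t_max = 100                  # hypothetical largest lag of interest
max_lag = int(0.7 * t_max)   # drop the noisy tail, as described in the text
rho = autocorrelation(series, max_lag)
tau = integrated_autocorrelation_time(rho)
stride = max(1, math.ceil(tau))  # steps to leave between measurements
print(f"tau_int ~ {tau:.1f}, measure every {stride} steps")
```

When several statistics are tracked (for example energy and magnetization), the same calculation can be run on each series and the largest resulting stride used for all of them.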